Using Docker for full stack local development with Node.js and Postgres - Part 2

June 30, 2020

This is part 2 of a 2 part post about Docker for web development.

- Part 1: An intro to Docker and managing your own containers

- Part 2: Using Docker for full stack local development with Node.js and Postgres - [you are here]

Intro

My previous Docker post was an introduction to what Docker is, and how it can be used to package an application into a Docker image for sharing. Although that post covered essential Docker knowledge, it didn’t cover what I think is its most useful and practical purpose: letting developers run their apps using Docker as a development environment. This post explains why this is useful, and how to implement it to help with your own development process.

Specifically, this post covers:

- Why use Docker for development?

- Using Docker Compose

- Networks and Volumes with Docker Compose

- Problems with dependencies and local development inside containers

A prerequisite here is to have Docker Desktop installed on your host machine, and to have read my previous Docker post for an understanding of the different uses of Docker.

Why use Docker for development?

The benefits of Docker discussed in this post are simple - it allows an application to be run using one or more containers, each with its own software versions specified. For example, you can run a specific version of Linux, using a specific version of Node. This is great for sharing because you can be sure that it will run on other users’ machines.

But this advantage of specifying software and versions is also ideal for the development process. I’ve spent countless hours trying to run old side projects from a couple of years ago and encountering problems where a certain NPM package or function doesn’t work anymore because my machine has been updated. Docker as a dev environment allows an application to be run exactly as it was created, meaning you can jump right back into a project and not have to worry about those dreadful issues.

Another advantage is that with Docker being host machine agnostic, you actually don’t need some software installed on your host machine at all. You can completely uninstall Ruby or Go or Node and your app will run. Similarly, it’s much easier to play with other tech stacks as you don’t need to worry about maintaining all the baggage on your host machine.

The implementation

For the purposes of this post, I’ll be showing how I recently updated one of my GitHub projects to use Docker for development. This project is simple, consisting of a front end React app (built with Next.js), a server side Node API, and a Postgres database. With this setup I wanted to have 3 separate containers:

- One for the Next.js React app

- One for the server side Node API

- One for the Postgres database

This will be done using a Dockerfile and Docker Compose. The Dockerfile defines the setup for the local environment and provides a way to package the app up to eventually be production ready, and Docker Compose is used to orchestrate the containers together and allow them to communicate between one another.

Docker Compose makes use of Services, Networks and Volumes to allow containers to work together on one machine. The same end result can be achieved without Docker Compose, and instead using Docker CLI and creating the setup manually, but Docker Compose makes it much faster and easier to get your perfect dev environment ready so you can focus on the developing! Services, Networks and Volumes will be covered more later.

Using Docker for development

For some context before we jump in, here’s my desired project structure for the boilerplate with Docker Compose:

    - /api
      |- Dockerfile
      |- package.json
      |- ... (other files in the Node API)
    - /client
      |- Dockerfile
      |- package.json
      |- ... (other files in the Next.js client)
    - docker-compose.yml
    - README.md

To get started, a Dockerfile is needed for each of the front end and back end parts of the app. Here’s the front end:

    # Dockerfile
    FROM node:12.12.0-alpine
    WORKDIR /home/app/client
    EXPOSE 3000

And here’s the back end one:

    # Dockerfile
    FROM node:12.12.0-alpine
    WORKDIR /home/app/api
    EXPOSE 3001

I’ve run into problems before when specifying the Node version as node:latest or similar, which uses whatever the latest version of Node happens to be. I recommend specifying an exact version, as that’s kind of the whole point of using Docker this way - keeping the versions and everything exactly what they should be to run your app.

You’ll notice the Dockerfiles are very simple compared to the ones in the last post. This is because the last post’s Dockerfiles were set to package the app up for use to run the application, not for development of the application.

The Dockerfiles perform three tasks:

- FROM - start from a specific version of the Node image (12.12.0), using the Alpine variant, which is a leaner image taking up much less space than the full version.

- WORKDIR - specify where you want the app to be run inside the container.

- EXPOSE - declare the port the app listens on inside the container. The mapping that makes it reachable from your local machine is set up in the Compose file. More on this later.

The Dockerfiles in the last post also contained instructions to COPY files over from the host machine to the container, and RUN the command to actually start the app. But again, the instructions in the last post were for packaging the app to be run, not for use in development.

Because the shorter Dockerfiles above don’t copy any code or start the app, running them with the same docker run... CLI command as before won’t give us a working app. Instead we can use a docker-compose.yml file to run our app:

    # docker-compose.yml
    version: "3"
    services:
      client_dev:
        build: ./client
        command: sh -c "npm install && npm run dev"
        ports:
          - 8000:3000
        working_dir: /home/app/client
        volumes:
          - ./client:/home/app/client
      api_dev:
        build: ./api
        command: sh -c "npm run migrate-db && npm install && npm run dev"
        ports:
          - 8001:3001
        working_dir: /home/app/api
        volumes:
          - ./api:/home/app/api
        depends_on:
          - db
      db:
        image: postgres
        environment:
          - POSTGRES_USER=user
          - POSTGRES_DB=postgresdb
          - POSTGRES_PASSWORD=password
          - POSTGRES_HOST_AUTH_METHOD=trust
        volumes:
          - ./db/data/postgres:/var/lib/postgresql/data
        ports:
          - 5432:5432

You can see each of the 3 services is defined in this YAML file - client_dev, api_dev and db. These define the 3 containers which Docker Compose will spin up. They can be called whatever you want, but these service names are used when you want to debug using the Docker CLI.

For client_dev and api_dev, there’s a build property. This defines the location of the Dockerfile which Docker Compose uses to build your containers.

There’s also the command property, which defines a command to be run once the containers are up and running. Once the container is running, we want to ensure the packages are installed from the package.json file, otherwise our app won’t work. You can run the npm install commands separately and manually before running the containers, but it’s good to have it here so people don’t need to enter commands themselves.

The ports property is similar to the port forwarding explained in the last post. It enables your host machine to access your container’s ports on localhost. In our client app, the Next.js app is set to run on port 3000 inside the container, and the Dockerfile EXPOSEs port 3000 to match. The ports section of the Compose file maps host port 8000 to container port 3000, so we access the app on localhost using port 8000. We could have the Compose file use the same port for host and container, such as - 3000:3000, but you may have some ports in use and need to specify different ones for your machine.
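To make the HOST:CONTAINER order concrete, here is a minimal sketch in TypeScript. The helper name parsePortMapping is hypothetical and is not part of Docker or the boilerplate; it only splits the short-syntax string used in the Compose file above.

```typescript
// Hypothetical helper (not a Docker API): splits a Compose short-syntax
// port mapping of the form "HOST:CONTAINER" into its two halves.
interface PortMapping {
  hostPort: number; // the port you open on localhost
  containerPort: number; // the port the app listens on inside the container
}

function parsePortMapping(mapping: string): PortMapping {
  const parts = mapping.split(":");
  if (parts.length !== 2) {
    throw new Error(`expected "HOST:CONTAINER", got "${mapping}"`);
  }
  const hostPort = Number(parts[0]);
  const containerPort = Number(parts[1]);
  if (!Number.isInteger(hostPort) || !Number.isInteger(containerPort)) {
    throw new Error(`ports must be integers in "${mapping}"`);
  }
  return { hostPort, containerPort };
}

// The client_dev mapping from the Compose file above.
const clientPorts = parsePortMapping("8000:3000");

// The browser on the host uses the left-hand (host) port.
const browserUrl = `http://localhost:${clientPorts.hostPort}`;
```

The left-hand number is the only one your browser ever sees; the right-hand number only matters inside the container.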

The working_dir defines the directory inside the container in which the command is run. This is set to the same working directory as defined in the Dockerfile of course, as this is where the app code is located.

Finally, the volumes property is what defines a Volume in the Compose file. As described on the Docker site, Volumes are a way “for persisting data generated by and used by Docker containers”. In our case, it means we want to use a Volume to keep the code of our app outside of the container, but have the code mounted so it can still be used by the container. I’ll explain this in more detail later.

Now the docker-compose.yml and each Dockerfile are in the right place, we can run docker-compose up which will spin up each of the 3 containers for us. But what’s actually happening when we run that command?

The docker-compose process explained

Once the Compose command is run, Docker will check the location of the Dockerfiles you have defined in the build part of the Compose file. Each Dockerfile names the base image it requires in its FROM line (Node in our example), so the Node image is downloaded for us. The db service in our Compose file uses the Postgres image, which is downloaded without the use of a Dockerfile. Instead, this image is defined within the Compose file with image: postgres.

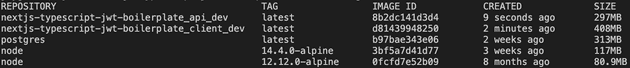

Once the images are downloaded, our own images are built on top of the downloaded Node image. You can confirm this by running docker images and seeing the Node image, and another image for each service defined in the Compose file:

The image name is prefixed with the directory of the Compose file. My project sits in a directory called “nextjs-typescript-jwt-boilerplate”, so images are created with this prefix so we can tell which Docker Compose setup this relates to.

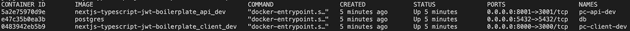

Once the images are created, the containers are then run from the images we’ve just created - all happening automatically. Again, this can be confirmed by entering docker ps -a to show a list of containers (the -a flag includes stopped containers as well as running ones):

Volumes

At this point, as we’ve not copied over any files from our host machine to create the image, the container only contains whatever Node version we’ve chosen. This is where the Volumes come in. In the client app, the Volumes are defined as ./client:/home/app/client. This means that we want to take the directory ./client on our host machine (relative to the docker-compose.yml file) and mount it in our container at the directory /home/app/client.

This is crucial to enable our local development environment. We want to be able to change files a lot when developing so we can save, test, debug, build etc., so mounting our host machine files to the container that way is perfect. The alternative is to COPY the files over to the image, and when saving a file have the whole image re-built and deployed as a fresh container. This has obvious overheads and will take some time to re-build each time.

As well as using Volumes to speed up the dev process for the client_dev and api_dev services, Volumes are also utilised when running the Postgres image. Due to the nature of containers and images as discussed in the last post, data is not persistent. When a container is removed (for example with docker-compose down), all data written inside it is gone, and a newly created container starts again from the data contained in the original image. A Volume is used with the postgres container to keep a copy of all data on our host machine so it persists when the container is removed.

Network

As well as having a clear separation between local files and the files contained in the images/containers, it’s important that the containers can communicate with one another. Luckily, with Docker Compose this is all handled automatically. Without Docker Compose, containers must be added to a Network by entering commands into the CLI. However, a project run using Docker Compose automatically creates a Network for all containers in the project.

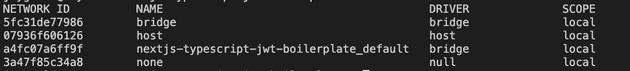

Running docker network ls will show you all the Networks on your host machine:

The Networks defined by Docker Compose are prefixed with the same name as the image and container prefixes. Again, to help show where the Network originates from.

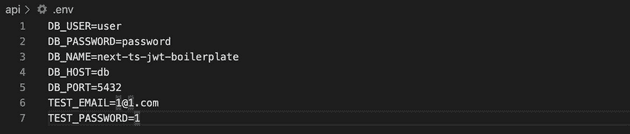

The Network enables containers to communicate in a very convenient way when working with the web. Usually when we make a request from a client side app to a server side API on our local machines, we use the host name “localhost” or IP address “127.0.0.1”. For example, a fetch request may hit an endpoint like https://localhost:3001/api/v1/auth/login. This is because the client and API are both on the same local network. Docker’s Network feature allows us to use the service names of the containers as hostnames to facilitate communication. For example, on the boilerplate project in this post, the Express API connects to the Postgres database using the hostname db, which is the name of the service in the docker-compose.yml file.
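As a rough illustration of what that looks like in code, here is a minimal TypeScript sketch that builds a Postgres connection string from the values in the Compose file above. The DbSettings type and buildPostgresUrl function are hypothetical names for this example, not the boilerplate’s actual code, and how the string is handed to a database client is left out.

```typescript
// Hypothetical settings shape; the values below come from the db service
// in the docker-compose.yml shown earlier.
interface DbSettings {
  user: string;
  password: string;
  host: string;
  port: number;
  database: string;
}

// Builds a standard postgres:// connection URL.
function buildPostgresUrl(s: DbSettings): string {
  const user = encodeURIComponent(s.user);
  const password = encodeURIComponent(s.password);
  return `postgres://${user}:${password}@${s.host}:${s.port}/${s.database}`;
}

// From inside the api_dev container, the host is the Compose service name.
const urlFromApiContainer = buildPostgresUrl({
  user: "user",
  password: "password",
  host: "db",
  port: 5432,
  database: "postgresdb",
});

// From a tool running on the host machine, the published port is reached
// through localhost instead.
const urlFromHostMachine = buildPostgresUrl({
  user: "user",
  password: "password",
  host: "localhost",
  port: 5432,
  database: "postgresdb",
});
```

The only difference between the two URLs is the host: db inside the Compose network, localhost from the host machine.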

When sending a request from the client to the server, however, we continue to use localhost, as that request is coming from the browser rather than from inside a container. The browser communicates with any container using the localhost host name and the port mapping, but direct communication between containers needs to use the Network feature of Docker.
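One way to picture the split is a small helper that picks the API base URL depending on where the code runs. This is only a sketch: resolveApiBaseUrl is a hypothetical function, and the container-side case (for example, code in client_dev calling the API during server-side rendering) is an assumption that this post does not cover.

```typescript
// Hypothetical helper: chooses the API base URL based on where the
// request originates.
function resolveApiBaseUrl(runningInBrowser: boolean): string {
  if (runningInBrowser) {
    // The browser runs on the host, so it goes through the published
    // host port from the Compose file (8001:3001).
    return "http://localhost:8001";
  }
  // Code running inside another container on the Compose network uses
  // the service name and the container port directly.
  return "http://api_dev:3001";
}

const fromBrowser = resolveApiBaseUrl(true);
const fromContainer = resolveApiBaseUrl(false);
```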

What about the node_modules?

Some of you may be wondering about what happens with the node_modules directory and generally managing dependencies. With our current setup mentioned above, the dependencies are all installed and used on our local machines. There’s no copying over of files to the image or container, as everything in terms of our source code is mounted using the volumes. This is fine for a very simple setup, but there are some drawbacks.

Firstly, having no dependencies (or any code for that matter) embedded in the image means when someone downloads your image from Docker Hub, it doesn’t contain anything of substance. It’s not a huge problem as the point of this post is to show how Docker can be used for local development, but if publishing your application to Docker Hub is on your to do list, more work needs to be done.

Another problem with mounting all of your code is that the node_modules are actually installed on your host machine, and not inside the image. This might not seem like a big deal (and for most people it actually won’t be), but there are some situations where the host machine and Docker container are running such a different OS that a certain package will install ok on one, but fail on the other. This has happened to me before with the NPM package bcrypt. This package would install ok on my Mac but not on the container.

The way to ensure the installation and running process of your app is exactly the same for all users that install it (the whole point of using Docker) is to install the dependencies in the image. So how’s this done?
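A related way to see why the two environments can disagree: packages like bcrypt include native code that is compiled for a particular OS, CPU architecture and C library, and Alpine images use musl rather than glibc. The sketch below is a simplified model with hypothetical names (RuntimeTarget, canReuseNativeBuild); it is not how npm itself decides anything.

```typescript
// Simplified, hypothetical model of what a compiled native addon is tied to.
interface RuntimeTarget {
  platform: string; // e.g. "darwin" or "linux"
  arch: string; // e.g. "x64" or "arm64"
  libc: "glibc" | "musl" | "none";
}

// A native build can only be reused when every part of the target matches.
function canReuseNativeBuild(builtOn: RuntimeTarget, runsOn: RuntimeTarget): boolean {
  return (
    builtOn.platform === runsOn.platform &&
    builtOn.arch === runsOn.arch &&
    builtOn.libc === runsOn.libc
  );
}

// Assumed example targets: a Mac host and the node:12.12.0-alpine container.
const macHost: RuntimeTarget = { platform: "darwin", arch: "x64", libc: "none" };
const alpineContainer: RuntimeTarget = { platform: "linux", arch: "x64", libc: "musl" };

// node_modules built on the Mac and mounted into the container do not match.
const hostBuildWorksInContainer = canReuseNativeBuild(macHost, alpineContainer);
```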

Improving the setup

The previous setup is fine for basic development between a small team, but there are improvements we can make to tackle the issues mentioned above. We can update the Dockerfile:

    # Dockerfile
    FROM node:12.12.0-alpine
    WORKDIR /home/app/client
    # copy over the package files
    COPY package*.json ./
    # install the dependencies
    RUN npm install
    # copy over the rest of the source code
    COPY . .
    EXPOSE 3000
    # run the app in production
    CMD [ "npm", "start" ]

Note that Dockerfile comments need to be on their own lines, as a trailing # after COPY or CMD is treated as part of the instruction. The package.json and package-lock.json files are copied over to the image first, and npm install is run to install the node_modules into the image. Once installed, the rest of the code is copied over (done in two stages for caching purposes). All this is done at build time, which is triggered when running docker-compose build --no-cache or docker-compose up --build (it’s best to use --no-cache sometimes so the image can be completely rebuilt).

The above Dockerfile can also be run using the docker build... commands, but this post is specifically discussing Docker Compose.

With the Dockerfile above handling the npm install, the Compose file only needs to start a container from the built image and run the npm run dev command:

    # docker-compose.yml
    version: "3"
    services:
      client_dev:
        build: ./client
        command: sh -c "npm run dev"
        ports:
          - 8000:3000
        volumes:
          - ./client:/home/app/client
          - /home/app/client/node_modules
        working_dir: /home/app/client

We then need to define a volume for the node_modules inside the container, as shown above, because without it the ./client mount from the host would sit on top of /home/app/client and hide the node_modules that were installed in the image. This is pretty crucial, otherwise you’ll end up with errors when your code can’t see any dependencies. When you edit the package.json file, you’ll need to re-build the image with docker-compose up --build so the node_modules can be installed again, but luckily Docker has an excellent caching feature which means it will take way less time than the initial installation.
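To see why the extra node_modules entry works, it can help to think of the mounts as a lookup where the most specific container path wins. The sketch below models that idea in TypeScript; the Mount type and resolveMount function are hypothetical and only approximate what Docker does.

```typescript
// Hypothetical model of the two mounts on the client_dev service.
interface Mount {
  containerPath: string;
  source: string;
}

const clientMounts: Mount[] = [
  { containerPath: "/home/app/client", source: "bind mount of ./client on the host" },
  {
    containerPath: "/home/app/client/node_modules",
    source: "anonymous volume, initially filled from the image",
  },
];

// Returns the mount that serves a path: the longest containerPath that is
// equal to the path or is a parent directory of it.
function resolveMount(path: string, mounts: Mount[]): Mount | undefined {
  let best: Mount | undefined;
  for (const m of mounts) {
    const covers = path === m.containerPath || path.startsWith(m.containerPath + "/");
    if (covers && (best === undefined || m.containerPath.length > best.containerPath.length)) {
      best = m;
    }
  }
  return best;
}

// Source files come from the host, dependencies come from the volume.
const pageSource = resolveMount("/home/app/client/pages/index.tsx", clientMounts);
const reactSource = resolveMount("/home/app/client/node_modules/react/index.js", clientMounts);
```

Without the second entry, every path under /home/app/client, including node_modules, would resolve to the host directory.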

There’s one problem though…

One deal breaker with this approach is that now all the node_modules are contained in the image, and not in your project structure, meaning the dependencies are not available to your code editor. This is especially bad when using TypeScript as the types can’t be used to help with development, and the code will be littered with those annoying errors on most of your third party functions.

I spent some time looking into this a while back and found a few solutions but they didn’t quite work (or work how I wanted). One solution makes use of a VS Code extension to allow development inside the actual container, rather than the file system on the host machine. This seemed like a good idea at the start but it was too fiddly for me, and I don’t like the idea of relying on this for the whole development process. Another way was to keep all dependencies and code on the host machine and not copy anything over to an image at all - which is what I wanted to avoid, as that was the setup at the top of this post. I wanted something which would still allow the creation of a production ready container or set of containers.

I’ve not yet found a good enough solution to this, so my solution in the meantime is to install the dependencies while building the image, running npm install in the Dockerfile, and also installing dependencies locally afterwards. It’s not a great solution, but it’s the easiest way I found to be able to make use of TypeScript typings. Alternatively, the TypeScript warnings can be turned off, but then autocompletion won’t be available.

The final solution - development and production

So my end solution is to have a separate Docker Compose process for development and production builds. This would mean for development we’d have our usual docker-compose.yml file:

    # docker-compose.yml
    version: "3"
    services:
      client_dev:
        build: ./client
        command: sh -c "npm run dev"
        container_name: pc-client-dev
        ports:
          - 8000:3000
        volumes:
          - ./client:/home/app/client
          - /home/app/client/node_modules
        working_dir: /home/app/client
      api_dev:
        build: ./api
        command: sh -c "npm run migrate-db && npm run dev"
        container_name: pc-api-dev
        ports:
          - 8001:3001
        volumes:
          - ./api:/home/app/api
          - /home/app/api/node_modules
        working_dir: /home/app/api
        restart: on-failure
        depends_on:
          - db
      [...]

This installs dependencies on the container, but runs the app in dev mode when starting with Docker Compose. As well as running the above, we can also run npm install on our host machine to ensure the dependencies are installed locally so the editor can find the TypeScript types.

This would use the Dockerfile from the improved setup earlier in the post, without the production CMD:

    # Dockerfile
    FROM node:14.4.0-alpine
    WORKDIR /home/app/client
    COPY package*.json ./
    RUN npm install
    COPY . .
    EXPOSE 3000

Now for production we can create a new Docker Compose file called docker-compose.prod.yml:

    # docker-compose.prod.yml
    version: "3"
    services:
      client_dev:
        build:
          context: ./client
          dockerfile: Dockerfile.prod
        command: sh -c "npm run start"
        volumes:
          - ./client:/home/app/client
          - /home/app/client/node_modules
      api_dev:
        build:
          context: ./api
          dockerfile: Dockerfile.prod
        command: sh -c "npm run migrate-db && npm run prod"
        volumes:
          - ./api:/home/app/api
          - /home/app/api/node_modules

This extends the original Compose file, and references a new set of Dockerfiles in the build part of the file. Here’s one for the API for example:

    # Dockerfile.prod
    FROM node:12.12.0-alpine
    WORKDIR /home/app/api
    COPY package*.json ./
    RUN npm install
    COPY . .
    EXPOSE 3001
    CMD ["npm", "run", "prod"]

We have the added benefit of now being able to run a production command to start our app with npm run prod, either in the Dockerfile for when the image is built and run on its own, or in the Docker Compose file for when the build is initiated using the Compose command.

To run in development it’s docker-compose up, and for production it’s docker-compose -f docker-compose.yml -f docker-compose.prod.yml up -d, extending the Compose file and running in detached mode.
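When two files are passed with -f, Compose merges each service from the later file over the same service in the earlier one. The TypeScript sketch below is a simplified model of that behaviour for just the keys used in this post (single values are replaced, ports are appended, volumes are merged by container path). The ServiceConfig type and mergeService function are hypothetical and do not cover Compose’s full merge rules.

```typescript
// Hypothetical, cut-down shape of a Compose service.
type BuildConfig = string | { context: string; dockerfile?: string };

interface ServiceConfig {
  build?: BuildConfig;
  command?: string;
  ports?: string[];
  volumes?: string[];
}

// The container-side path of a volume entry such as "./client:/home/app/client".
function volumeTarget(volume: string): string {
  const parts = volume.split(":");
  return parts.length > 1 ? parts[1] : parts[0];
}

// Simplified merge: later single values win, ports are concatenated,
// volumes are keyed by their container path.
function mergeService(base: ServiceConfig, override: ServiceConfig): ServiceConfig {
  const volumes = new Map<string, string>();
  for (const v of [...(base.volumes ?? []), ...(override.volumes ?? [])]) {
    volumes.set(volumeTarget(v), v);
  }
  return {
    build: override.build ?? base.build,
    command: override.command ?? base.command,
    ports: [...(base.ports ?? []), ...(override.ports ?? [])],
    volumes: Array.from(volumes.values()),
  };
}

// client_dev from docker-compose.yml and docker-compose.prod.yml above.
const devClient: ServiceConfig = {
  build: "./client",
  command: 'sh -c "npm run dev"',
  ports: ["8000:3000"],
  volumes: ["./client:/home/app/client", "/home/app/client/node_modules"],
};
const prodClient: ServiceConfig = {
  build: { context: "./client", dockerfile: "Dockerfile.prod" },
  command: 'sh -c "npm run start"',
  volumes: ["./client:/home/app/client", "/home/app/client/node_modules"],
};

const mergedClient = mergeService(devClient, prodClient);
```

In this model the production run picks up the new build and command, while the port mapping is inherited from the development file because the production file does not define one.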

Thanks for reading!

Senior Engineer at Haven